Phase 1: The Starting Point — DigitalOcean Hosting

Infrastructure Overview

The customer’s initial production environment was hosted on DigitalOcean Droplets: Linux-based virtual machines running the application directly on the host OS. While DigitalOcean offered simplicity and low initial cost, this setup quickly revealed significant limitations as the customer scaled its client operations.

Challenges Encountered

Operational Bottlenecks

- Client feedback highlighted noticeable delays in receiving critical application updates, raising concerns about responsiveness and SLA adherence.

- Latency issues were observed in application response times and data loading, directly impacting end-user satisfaction.

Infrastructure Rigidity

- DigitalOcean supports only Linux-based environments, limiting the flexibility needed for applications that may require a Windows-compatible runtime.

- The setup had no container orchestration, making it difficult to manage application dependencies consistently across environments.

Manual Deployment Burden

- Deployments relied entirely on manual scripts and custom tooling.

- Releases required 1–2 engineers to spend 6–7 hours per week solely on deployment oversight and maintenance.

- This manual approach introduced a high risk of human error and created deployment inconsistencies across environments.

Phase 2: Migration to AWS EC2

Why EC2?

As a first step toward modernization, Cogniv Technologies recommended migrating the customer’s workload to AWS EC2 (Elastic Compute Cloud). This move gave our customer enterprise-grade infrastructure, deeper managed-service integrations, and the foundation needed to adopt containerization in the next phase.

What Changed

- The application was rehosted on EC2 instances within a properly architected AWS VPC, with security groups, subnets, and a NAT Gateway enforcing network isolation and security best practices.

- Amazon RDS for MySQL replaced the self-managed database, offloading patching, backup automation, and storage scaling to AWS and allowing the team to focus on application development rather than database administration.

- AWS CloudTrail was enabled to capture a full audit trail of API activity across the account, supporting compliance and operational governance from day one.

- Amazon CloudWatch was configured for centralized logging, metrics collection, and alerting across all services.

- Amazon Route 53 was used for DNS management, enabling reliable domain routing and health-check-based failover.
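
The case study does not publish its network layout. The sketch below shows one way a VPC like the one described might be divided: a public subnet per Availability Zone for the NAT Gateway, and a private subnet per zone for the EC2 instances and the RDS database. The `10.0.0.0/16` range, the `/24` subnet size, and the zone names are hypothetical placeholders.

```python
import ipaddress

# Hypothetical values: the case study does not publish its CIDR ranges or zones.
VPC_CIDR = "10.0.0.0/16"
AVAILABILITY_ZONES = ["zone-a", "zone-b"]


def plan_subnets(vpc_cidr: str, zones: list[str], prefix: int = 24) -> dict[str, dict[str, str]]:
    """Carve one public and one private subnet per zone out of the VPC range.

    Public subnets would hold the NAT Gateway; private subnets would hold the
    EC2 instances and RDS, reaching the internet only outbound via the NAT Gateway.
    """
    vpc = ipaddress.ip_network(vpc_cidr)
    blocks = vpc.subnets(new_prefix=prefix)  # yields non-overlapping blocks in address order
    plan: dict[str, dict[str, str]] = {}
    for zone in zones:
        plan[zone] = {"public": str(next(blocks)), "private": str(next(blocks))}
    return plan


if __name__ == "__main__":
    for zone, subnets in plan_subnets(VPC_CIDR, AVAILABILITY_ZONES).items():
        print(zone, subnets)
```

With these placeholder values, the first zone gets `10.0.0.0/24` (public) and `10.0.1.0/24` (private). Security groups and route tables would then be attached to these ranges; they are not modelled here.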

Improvements Gained

- Eliminated DigitalOcean’s Linux-only platform constraint, opening the path to broader runtime support.

- Gained access to the full AWS service ecosystem for future integrations.

- Centralized monitoring and auditing replaced fragmented, manual log management.

- Database reliability improved significantly with RDS automated backups and Multi-AZ deployment support.

Remaining Gaps

While the EC2 migration addressed infrastructure maturity, several challenges persisted:

- Applications still ran directly on EC2 instances without containerization, meaning environment inconsistencies between development and production remained.

- Deployment was still largely manual, continuing to consume significant engineering time.

- Scaling required manual intervention or basic Auto Scaling configurations, without fine-grained container-level resource management.

Phase 3: Containerization with Docker on EC2

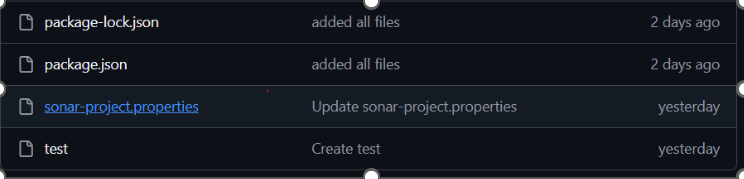

Adopting Docker

To address environment consistency and dependency management, Cogniv Technologies led the effort to containerize the customer’s application using Docker. All application components were packaged into Docker images, with container images stored securely in Amazon ECR (Elastic Container Registry).

What Changed

- Application workloads were containerized and deployed as Docker containers running on EC2 instances.

- Amazon ECR served as the private image registry, replacing any ad-hoc image storage and ensuring version-controlled, immutable container artifacts.

- Docker Compose was used to manage multi-container deployments locally and in staging environments, improving developer workflow consistency.
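
Version-controlled images need a predictable naming scheme. The sketch below builds private ECR image references in the standard `<account>.dkr.ecr.<region>.amazonaws.com/<repository>:<tag>` form. Tagging each image with the short git commit SHA is an assumption on our part, not something the case study specifies. The account ID, region, and repository name are hypothetical.

```python
import re

# Hypothetical account, region, and repository; replace with real values.
ACCOUNT_ID = "123456789012"
REGION = "us-east-1"
REPOSITORY = "customer-app"


def release_tag(git_sha: str, length: int = 7) -> str:
    """Use the short commit SHA as the image tag so each build is traceable to its source."""
    if not re.fullmatch(r"[0-9a-f]{7,40}", git_sha):
        raise ValueError("expected a lowercase hexadecimal git commit SHA")
    return git_sha[:length]


def ecr_image_uri(account_id: str, region: str, repository: str, tag: str) -> str:
    """Build a fully qualified private Amazon ECR image reference."""
    if not re.fullmatch(r"\d{12}", account_id):
        raise ValueError("AWS account IDs are 12 digits")
    return f"{account_id}.dkr.ecr.{region}.amazonaws.com/{repository}:{tag}"


if __name__ == "__main__":
    tag = release_tag("3f9c2ab7d41e")
    print(ecr_image_uri(ACCOUNT_ID, REGION, REPOSITORY, tag))
```

If tag immutability is enabled on the repository, ECR rejects any push that would overwrite an existing tag. That guarantee is what keeps a given tag pointing at exactly one build.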

Benefits Unlocked

- Environment Parity: Containers encapsulate all application dependencies, eliminating the classic “works on my machine” problem across development, staging, and production.

- Faster Onboarding: New engineers could spin up the full application stack locally with a single Docker Compose command.

- Image Versioning: ECR enabled strict version control of container images, making rollbacks straightforward.

Remaining Challenges

Despite containerization benefits, managing Docker containers on EC2 still required:

- Manual EC2 instance management — patching, capacity planning, and instance health monitoring.

- No native service discovery, making inter-container communication in a microservices setup cumbersome.

- Scaling containers still required EC2-level intervention, not container-level auto-scaling.

- The deployment process, though improved, still lacked full automation and required engineer oversight.

Phase 4: Full Modernization — AWS ECS Fargate with CI/CD Automation

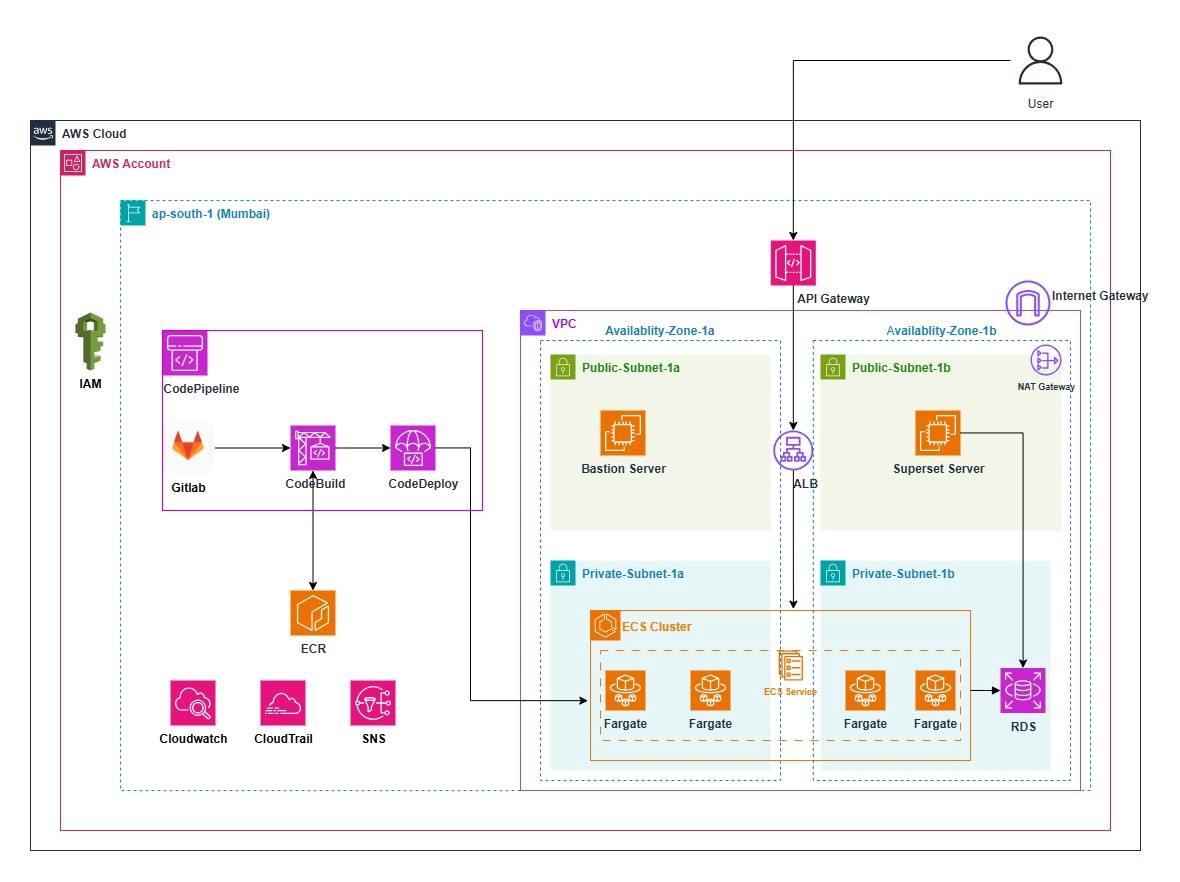

The Final Architecture

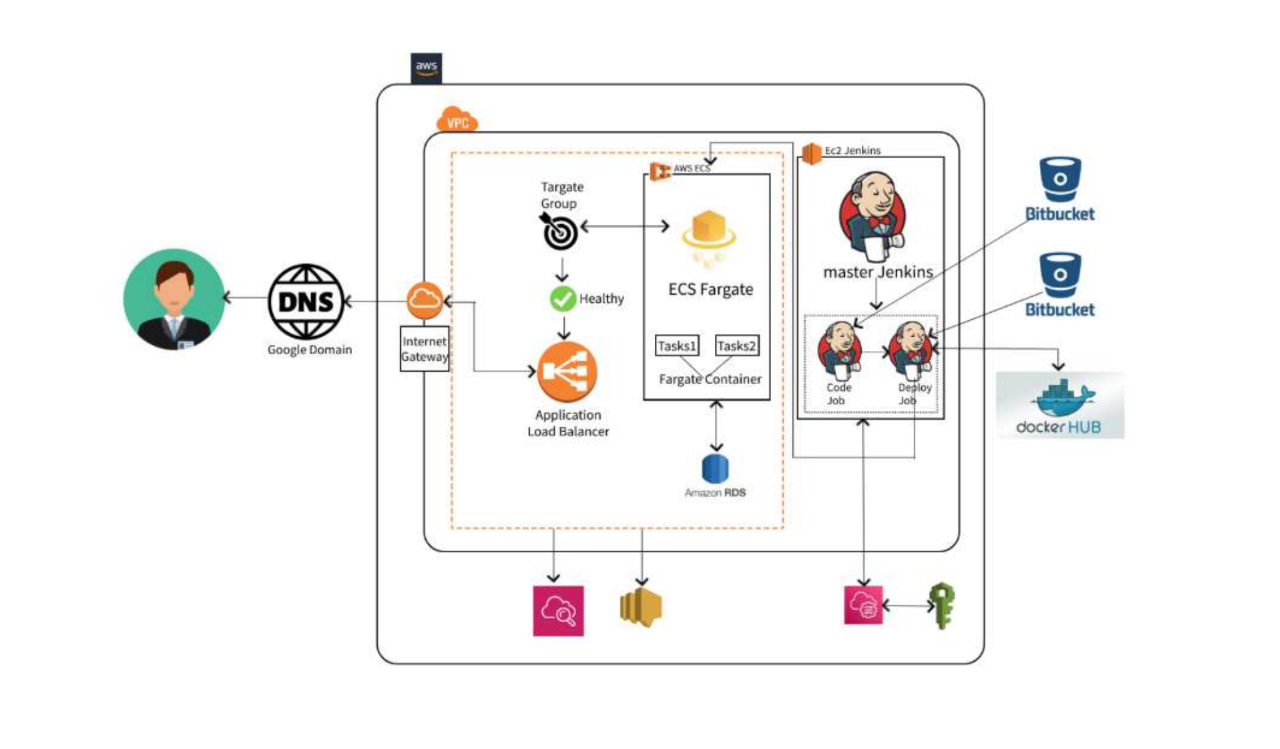

To eliminate the remaining infrastructure management overhead and fully automate the software delivery lifecycle, Cogniv Technologies migrated the customer to AWS ECS Fargate, a serverless container platform. This final phase consolidated all prior improvements into a cohesive, production-grade, fully managed architecture.

Core Solution Components

ECS Fargate — Serverless Container Hosting

AWS ECS Fargate removes the need to provision or manage EC2 instances. The customer’s containers run in a fully managed serverless environment where AWS handles host-level patching, capacity, and the underlying scaling infrastructure.
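
On Fargate, the unit of deployment is an ECS task definition. The sketch below builds a minimal single-container task definition payload. It uses the documented fields `requiresCompatibilities`, `networkMode`, `cpu`, `memory`, and `containerDefinitions`, and it checks the task size against Fargate's allowed CPU/memory pairings. Only the 0.25–4 vCPU tiers are included, and those should be confirmed against current AWS documentation. The family name, port, and default size are hypothetical. `executionRoleArn` is omitted for brevity.

```python
import json

# Task-level CPU units -> allowed memory (MiB) for the smaller Fargate sizes.
# Larger sizes exist; check the current ECS documentation before relying on this table.
FARGATE_MEMORY_OPTIONS = {
    256: [512, 1024, 2048],
    512: list(range(1024, 4096 + 1, 1024)),
    1024: list(range(2048, 8192 + 1, 1024)),
    2048: list(range(4096, 16384 + 1, 1024)),
    4096: list(range(8192, 30720 + 1, 1024)),
}


def fargate_task_definition(family: str, image_uri: str, container_port: int,
                            cpu: int = 512, memory: int = 1024) -> dict:
    """Return a minimal task definition payload for a single-container Fargate service.

    executionRoleArn is omitted for brevity; a task that pulls from a private
    ECR repository normally needs one.
    """
    if memory not in FARGATE_MEMORY_OPTIONS.get(cpu, []):
        raise ValueError(f"unsupported Fargate size: cpu={cpu}, memory={memory}")
    return {
        "family": family,
        "requiresCompatibilities": ["FARGATE"],
        "networkMode": "awsvpc",  # required on Fargate: each task gets its own network interface
        "cpu": str(cpu),          # task-level sizes are passed as strings
        "memory": str(memory),
        "containerDefinitions": [
            {
                "name": family,
                "image": image_uri,
                "essential": True,
                "portMappings": [{"containerPort": container_port, "protocol": "tcp"}],
            }
        ],
    }


if __name__ == "__main__":
    taskdef = fargate_task_definition(
        "customer-app", "123456789012.dkr.ecr.us-east-1.amazonaws.com/customer-app:3f9c2ab", 8080
    )
    print(json.dumps(taskdef, indent=2))
```

Validating the size locally catches an invalid CPU/memory pairing before the registration call is made, rather than during a deployment.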

- Blue/Green Deployment strategy was implemented via AWS CodeDeploy, enabling zero-downtime releases by running two identical environments in parallel and seamlessly shifting traffic only after the new version passes health checks.

- Deployment efficiency improved by approximately 99%, with zero downtime recorded across all production releases post-migration.

- ECS Service Discovery allows containers to communicate seamlessly within the VPC, enabling a clean microservices communication pattern without hardcoded endpoints.
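
The blue/green idea can be illustrated with a small simulation. This is a conceptual model, not CodeDeploy's API. Traffic moves from the current (blue) version to the new (green) version in fixed steps. Before each step, green must pass a health check at its current share, and any failure sends all traffic back to blue. A `step_percent` of 100 loosely resembles CodeDeploy's `CodeDeployDefault.ECSAllAtOnce` configuration, and 10 resembles `CodeDeployDefault.ECSLinear10PercentEvery1Minutes` without the timing. The case study does not say which configuration the customer used. `green_is_healthy` is a hypothetical stand-in for the load balancer's target health checks.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class TrafficSplit:
    blue: int   # percent of production traffic on the current (blue) version
    green: int  # percent on the new (green) version


def blue_green_shift(step_percent: int,
                     green_is_healthy: Callable[[int], bool]) -> list[TrafficSplit]:
    """Move traffic from blue to green in fixed steps.

    Before every step, green must pass a health check at its current share;
    any failure sends all traffic back to blue. Returns the splits applied, in order.
    """
    if not 0 < step_percent <= 100:
        raise ValueError("step_percent must be between 1 and 100")
    history = [TrafficSplit(blue=100, green=0)]
    green = 0
    while green < 100:
        if not green_is_healthy(green):
            history.append(TrafficSplit(blue=100, green=0))  # roll back; blue is still running
            return history
        green = min(100, green + step_percent)
        history.append(TrafficSplit(blue=100 - green, green=green))
    return history


if __name__ == "__main__":
    print(blue_green_shift(100, lambda share: True))       # all at once
    print(blue_green_shift(25, lambda share: share < 50))  # fails once green carries 50%
```

Zero-downtime rollback depends on keeping the blue environment alive until green has taken all traffic. That is why the rollback branch can simply restore 100% to blue.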

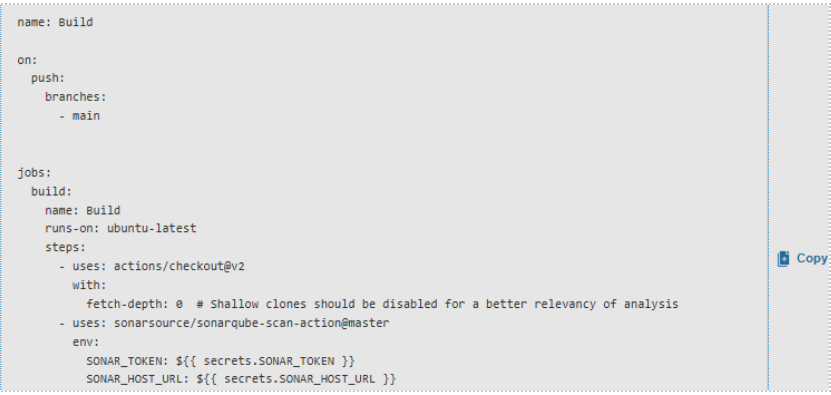

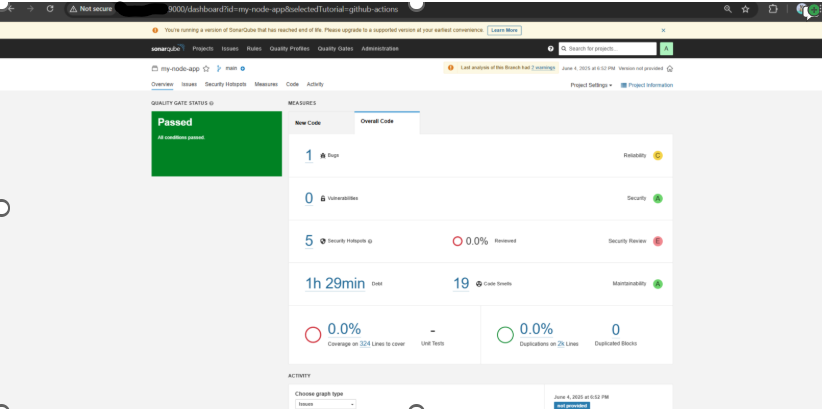

AWS CodePipeline — Fully Automated CI/CD

A complete CI/CD pipeline was built using AWS CodePipeline, CodeBuild, and CodeDeploy, automating the entire software delivery lifecycle from code commit to production deployment.
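
In a CodePipeline setup that deploys to ECS through CodeDeploy, the build stage typically hands the new image to the deploy stage. As we understand AWS's ECS blue/green tutorials, it does this through an `imageDetail.json` artifact containing an `ImageURI` key. The deploy action then substitutes that URI into a `taskdef.json` template at a placeholder such as `<IMAGE1_NAME>`. The sketch below reproduces that hand-off locally for illustration. The placeholder name is configurable on the deploy action, and the case study does not describe the customer's exact build output.

```python
import json

# Placeholder name used in AWS's ECS blue/green tutorials; configurable on the deploy action.
IMAGE_PLACEHOLDER = "<IMAGE1_NAME>"


def image_detail_json(image_uri: str) -> str:
    """Content of the imageDetail.json artifact the build stage hands to the deploy stage."""
    return json.dumps({"ImageURI": image_uri})


def render_task_definition(template: str, image_uri: str) -> dict:
    """Local preview of the image substitution performed on taskdef.json at deploy time."""
    if IMAGE_PLACEHOLDER not in template:
        raise ValueError(f"template is missing the {IMAGE_PLACEHOLDER} placeholder")
    return json.loads(template.replace(IMAGE_PLACEHOLDER, image_uri))


if __name__ == "__main__":
    uri = "123456789012.dkr.ecr.us-east-1.amazonaws.com/customer-app:3f9c2ab"  # hypothetical
    print(image_detail_json(uri))
    template = '{"family": "customer-app", "containerDefinitions": [{"name": "app", "image": "<IMAGE1_NAME>"}]}'
    print(render_task_definition(template, uri))
```

Keeping the image reference out of the committed task definition means every pipeline run deploys exactly the image it just built.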

Deployment Metrics Post-Automation:

- End-to-end deployment duration: ~8 minutes from code commit to live traffic

- Engineering time saved: ~3 hours per week per engineer

- Deployment downtime: 0%

- Manual deployment steps: Eliminated entirely

AWS API Gateway — Centralized API Management

API Gateway acts as the unified entry point for all backend services, handling:

- Authentication and authorization enforcement

- Request and response transformation

- Throttling and rate limiting to prevent abuse

- Caching for improved response performance

- Centralized API lifecycle management
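
API Gateway documents its throttling as a token bucket, configured with a steady-state rate and a burst limit. The sketch below implements that algorithm in plain Python to show how the two settings interact. It is not API Gateway code, and the rate and burst values in the example are hypothetical. The clock is injectable so the behaviour can be tested deterministically.

```python
import time
from typing import Callable


class TokenBucket:
    """Token bucket: `rate` tokens refill per second, up to `burst` tokens stored."""

    def __init__(self, rate: float, burst: int, clock: Callable[[], float] = time.monotonic):
        if rate <= 0 or burst <= 0:
            raise ValueError("rate and burst must be positive")
        self.rate = rate
        self.burst = burst
        self._clock = clock
        self._tokens = float(burst)  # start full so an initial burst is allowed
        self._last = clock()

    def allow(self) -> bool:
        now = self._clock()
        # Refill in proportion to elapsed time, capped at the burst size
        self._tokens = min(self.burst, self._tokens + (now - self._last) * self.rate)
        self._last = now
        if self._tokens >= 1:
            self._tokens -= 1  # each request consumes one token
            return True
        return False  # API Gateway responds 429 Too Many Requests in this case


if __name__ == "__main__":
    bucket = TokenBucket(rate=2, burst=3)
    print([bucket.allow() for _ in range(5)])  # a burst of 3 passes, the rest are throttled
```

The burst limit absorbs short spikes. The rate caps sustained throughput, which is what prevents a single client from exhausting backend capacity.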

This replaced direct exposure of EC2 and container endpoints, significantly improving the security posture and providing a consistent, manageable interface to the customer’s distributed services.

Amazon RDS — Fully Managed Relational Database

Amazon RDS for MySQL, introduced in Phase 2, was retained and is now more deeply integrated with the VPC and ECS networking fabric. AWS manages automated backups, software patching, storage auto-scaling, and failover, freeing the engineering team from database infrastructure concerns.

AWS CloudTrail — Governance and Audit

CloudTrail captures a comprehensive, tamper-evident log of all API activity across the AWS environment. Events are stored in Amazon S3 for long-term archiving and integrated with CloudWatch for real-time security alerting — supporting internal compliance, operational auditing, and external regulatory requirements.
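
Security alerting on CloudTrail data usually starts with a filter over event records. CloudTrail log files are JSON objects with a top-level `Records` array. Each record carries fields such as `eventName` and `eventSource`, plus `errorCode` when a call fails. The sketch below applies in Python the same condition as a commonly used CloudWatch metric filter for unauthorized API calls. The case study does not say which events the customer alerts on, so this choice of condition is illustrative only.

```python
import json


def unauthorized_calls(log_file_json: str) -> list[dict]:
    """Return CloudTrail records for API calls rejected for missing permissions.

    Same condition as the commonly used metric filter
    { ($.errorCode = "*UnauthorizedOperation") || ($.errorCode = "AccessDenied*") }.
    """
    flagged = []
    for record in json.loads(log_file_json).get("Records", []):
        code = record.get("errorCode") or ""  # absent on successful calls
        if code.endswith("UnauthorizedOperation") or code.startswith("AccessDenied"):
            flagged.append(record)
    return flagged


if __name__ == "__main__":
    sample = json.dumps({"Records": [
        {"eventName": "DescribeInstances", "eventSource": "ec2.amazonaws.com"},
        {"eventName": "DeleteBucket", "eventSource": "s3.amazonaws.com", "errorCode": "AccessDenied"},
        {"eventName": "RunInstances", "eventSource": "ec2.amazonaws.com",
         "errorCode": "Client.UnauthorizedOperation"},
    ]})
    for r in unauthorized_calls(sample):
        print(r["eventSource"], r["eventName"], r["errorCode"])
```

In production, the equivalent pattern would live in a CloudWatch Logs metric filter with an alarm on the resulting metric, rather than in application code.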

Amazon CloudWatch — Unified Observability

CloudWatch provides centralized monitoring across all services — ECS task metrics, RDS performance, API Gateway request rates, and CodePipeline execution status. Proactive alerting ensures the team is notified of anomalies before they impact end users.
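
Proactive alerting in CloudWatch is driven by alarms. An alarm goes to `ALARM` when at least `DatapointsToAlarm` of the last `EvaluationPeriods` datapoints breach a threshold. The sketch below is a simplified model of that "M out of N" rule for a greater-than-threshold comparison. It deliberately ignores CloudWatch's configurable missing-data handling and treats a short history as `INSUFFICIENT_DATA`. The CPU values and threshold in the example are hypothetical.

```python
from typing import Sequence


def alarm_state(datapoints: Sequence[float], threshold: float,
                evaluation_periods: int, datapoints_to_alarm: int) -> str:
    """Simplified M-out-of-N evaluation with a GreaterThanThreshold comparison."""
    if not 1 <= datapoints_to_alarm <= evaluation_periods:
        raise ValueError("need 1 <= datapoints_to_alarm <= evaluation_periods")
    window = list(datapoints)[-evaluation_periods:]  # most recent N periods
    if len(window) < evaluation_periods:
        return "INSUFFICIENT_DATA"
    breaching = sum(1 for value in window if value > threshold)
    return "ALARM" if breaching >= datapoints_to_alarm else "OK"


if __name__ == "__main__":
    cpu_percent = [50, 90, 95, 40, 92]  # hypothetical ECS service CPU utilisation
    print(alarm_state(cpu_percent, threshold=80, evaluation_periods=3, datapoints_to_alarm=2))
```

Requiring several breaching datapoints rather than one keeps a single spike from paging the team, while sustained anomalies still trigger an alert.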

Lessons Learned

Containerization Standardizes the Delivery Pipeline

Adopting Docker and Amazon ECS introduced consistency across development, staging, and production environments. Packaging applications as immutable container images eliminated environment drift and made rollbacks straightforward: simply redeploy the previous ECR image version.
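
The rollback described here amounts to choosing the image tag that was live before the current one. The sketch below assumes the team or pipeline keeps an ordered release history of deployed tags. That history is hypothetical, since ECR stores tags but does not record deployment order. The function returns the tag to redeploy.

```python
from typing import Sequence


def rollback_target(deployed_tags: Sequence[str], current_tag: str) -> str:
    """Return the image tag deployed immediately before `current_tag`.

    `deployed_tags` is a hypothetical release history, oldest first.
    """
    try:
        index = list(deployed_tags).index(current_tag)
    except ValueError:
        raise ValueError(f"{current_tag!r} is not in the release history") from None
    if index == 0:
        raise ValueError("no earlier release to roll back to")
    return deployed_tags[index - 1]


if __name__ == "__main__":
    history = ["1a2b3c4", "5d6e7f8", "9a0b1c2"]
    print(rollback_target(history, "9a0b1c2"))  # redeploy this tag
```

Because each tag refers to an immutable image, redeploying the returned tag restores exactly the previous build, with no rebuild step that could drift.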

Incremental Migration Reduces Risk

Rather than a single, all-at-once migration, the customer’s phased approach (DigitalOcean → EC2 → Docker → ECS Fargate) allowed each improvement to be validated before the next was introduced. This minimized risk, maintained continuity of client service, and allowed the engineering team to build confidence at each stage.

Automation Is a Force Multiplier

Implementing CI/CD with CodePipeline transformed how the customer delivers software. What previously required hours of manual engineering effort now runs in about eight minutes without human intervention. Eliminating manual steps directly reduced deployment errors and freed engineers to focus on product development rather than release management.

Serverless Containers Are the Right Abstraction for Most Teams

For this team, ECS Fargate showed that managing EC2 infrastructure was unnecessary overhead. By abstracting away host-level concerns, Fargate allowed the customer’s engineers to operate at the container level, the right abstraction for modern application delivery.

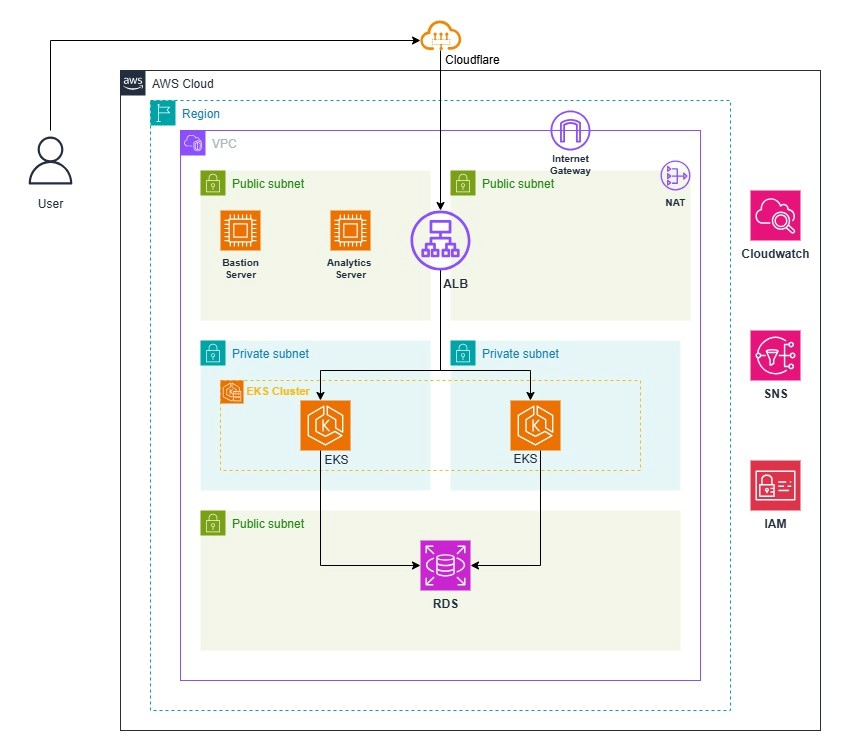

Final Architecture Diagram: